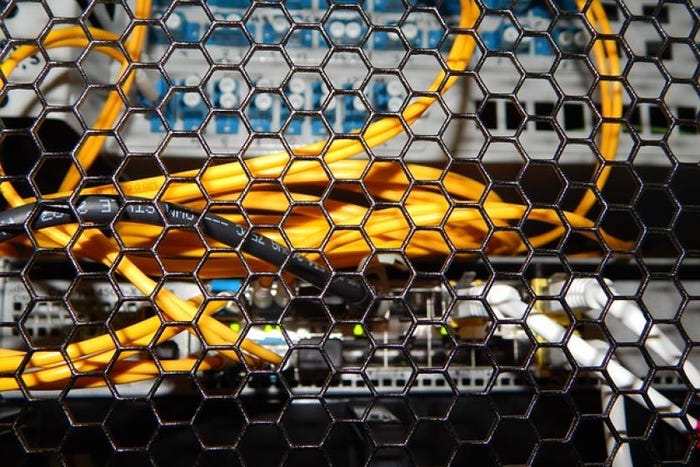

Switches & Routers

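Switches and routers are both fundamental networking devices, but they serve different purposes and operate at different layers of the OSI model: a switch forwards frames between devices within a network at Layer 2 (data link), while a router forwards packets between networks at Layer 3 (network).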


Enterprise Connectivity

Quality of Service (QoS) in Computer Networks: Boosting Performance

Quality of Service (QoS) in computer networks gives network engineers the means to prioritize latency-sensitive traffic flows. It is increasingly essential as bandwidth consumption soars.
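
To make the idea of prioritizing latency-sensitive flows concrete, here is a minimal sketch of strict-priority queuing, one common scheduling approach. The traffic class names (`voice`, `video`, `bulk`), their ranking, and the `StrictPriorityQueue` class are illustrative assumptions, not something taken from the article or from any vendor's implementation.

```python
import heapq
from itertools import count

# Hypothetical traffic classes; a lower number is served first.
PRIORITY = {"voice": 0, "video": 1, "bulk": 2}


class StrictPriorityQueue:
    """Toy scheduler: always sends the highest-priority packet waiting."""

    def __init__(self):
        self._heap = []
        self._seq = count()  # tie-breaker keeps FIFO order within a class

    def enqueue(self, traffic_class, packet):
        # Heap entries sort by (priority, arrival order).
        entry = (PRIORITY[traffic_class], next(self._seq), packet)
        heapq.heappush(self._heap, entry)

    def dequeue(self):
        # Pop the entry with the smallest (priority, arrival order) pair.
        return heapq.heappop(self._heap)[2]

    def __len__(self):
        return len(self._heap)


def drain(queue):
    """Dequeue every packet and return them in transmission order."""
    sent = []
    while len(queue):
        sent.append(queue.dequeue())
    return sent
```

If packets are enqueued as bulk `b1`, voice `v1`, video `d1`, voice `v2`, then `drain` returns the voice packets first in arrival order, followed by video and bulk. Real devices usually combine strict priority with rate limits or weighted scheduling so that lower classes are not starved; this sketch leaves that out.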
