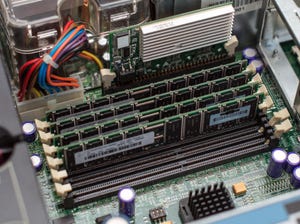

Broadcom unveiled an AI networking architecture, new appliances, and a partner program for VeloCloud at its recent VMware Explore event in Barcelona.

At VMware Explore Barcelona, VeloCloud Rolls Out Enhancements to Scale AI