Data Center Networking

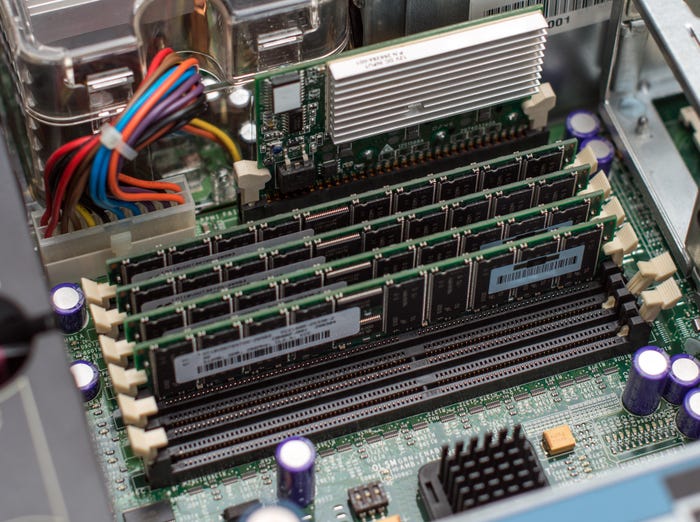

Data center networking comprises the infrastructure and technologies that interconnect servers, storage systems, and network devices within a data center facility.


Network Infrastructure

Election 2024: Downballot Races for IT Pros to Watch Tonight

These tight contests in Congress, plus one mayoral campaign and a few ballot propositions, could make waves in cloud computing, rural broadband, Big Tech oversight, and other issues key to IT pros.
