Hyperscale Invades The Enterprise

Enterprise datacenters facing increasing demands, typically with shrinking budgets, should take advice from the Web giants and move into hyperscale.

January 13, 2014

These days, the only constant in technology is that the pace of change will continue to increase. One group of Web-based companies has built its infrastructure to handle constant growth and global scale from the start.

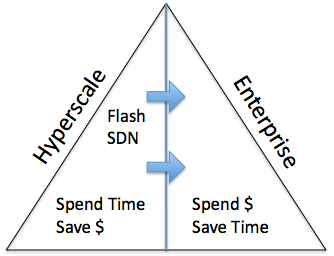

Yahoo, Amazon, Google, Facebook, and other companies like them would be out of business if they had the operational costs of typical datacenters. These hyperscale companies experience IT growth that is orders of magnitude larger than that of a typical Fortune 500 company. Hyperscale companies can deploy a team of PhDs to come up with a design that will save their companies money, while enterprise companies will spend money on fully baked solutions that will save their staffs time.

The hyperscale companies exemplify where IT is headed. CIOs need to consider using services built by hyperscale companies. They also must determine how the hyperscale companies' approach to technology consumption and their best practices can be adopted in enterprise datacenters.

First, an enterprise must ask itself whether having its own datacenter and infrastructure makes strategic sense. This is not to say that every company should move all applications to the public cloud tomorrow, but each should judge which applications to keep in-house, which to run in a public cloud, and which to consume as a service.

Amazon Web Services (AWS) is the leading Infrastructure as a Service (IaaS) provider. The primary use cases for AWS are development and test environments and modern applications (such as mobile applications) that can take advantage of the elasticity and scalability of cloud. We can expect to see more applications move to public cloud environments over time, as we have with the adoption of other disruptive technologies such as Windows, x86, and server virtualization.

Most companies today choose hybrid cloud, based on a combination of economic, security, and operational decisions. Note that this is typically a mix of public and private environments, with few applications that are truly federated across both. Enterprise IT can learn a great deal from hyperscale companies when it comes to improving the infrastructure and operational costs of a hybrid cloud.

Facebook launched the Open Compute Project (OCP) to show how to build a scalable, software-led architecture on top of commodity hardware. While the idea of commodity hardware sounds appealing, most enterprises cannot take advantage of it yet. Suppliers are not set up to do small deals, and IT departments lack the expertise.

Enterprise IT needs software-led architectures that are low-cost, simple to use, and easy to grow without staffing a team of PhDs to design or maintain them. Server SAN, as defined by Wikibon, is one example. This architecture sits at the intersection of hyperscale, converged architecture, flash storage, and software-defined datacenter trends.

While commodity hardware will take time to infiltrate the enterprise, the lessons of hyperscale operations are already making an impact on converged infrastructure. Converged infrastructure simplifies deployment and maintenance, which targets the high operational overhead of traditional infrastructure. The application is still critically important, however. Value increases exponentially the further a solution can be integrated up the stack, according to Wikibon research.

The biggest challenge for the adoption of hyperscale technology is that it changes the roles and skills needed in IT. While the choices and changes are difficult, it is imperative that CIOs embrace the opportunities or be left behind by competitors that do.

Stuart Miniman is principal research contributor at Wikibon and is an active member of the networking, virtualization, and cloud communities.
