When VMware announced the general availability of VSAN in March, it reported some frankly astounding performance numbers, claiming 2 million IOPS in a 100 percent read benchmark. Wade Holmes, senior technical marketing architect at VMware, revealed the details of VMware's testing in a blog post. Since, as I wrote recently, details matter when reading benchmarks, I figured I’d dissect Wade’s post and show why customers shouldn’t expect their VSAN clusters to deliver 2 million IOPS with their applications.

The hardware

In truth, VMware’s hardware configuration was in line with what I expect many VSAN customers to use. The company used 32 Dell R720 2U servers with the following configuration:

- 2x Intel Xeon CPU E5-2650 v2 processors, each with 8 cores @ 2.6 GHz

- 128 GB DDR3 memory (8x 16 GB DIMMs @ 1,833 MHz)

- Intel X520 10 Gbit/s NICs

- LSI 9207-8i SAS HBA

- 1x 400GB Intel S3700 SSD

- 7x 1.1TB 10K RPM SAS hard drives

I tried to price this configuration on the Dell site, but Dell doesn’t offer the LSI card, 1,833 MHz RAM, or the Intel DC S3700 SSD. Using 1,600 MHz RAM and 1.2 TB 10K SAS drives, the server priced out at $11,315 or so. Add in the HBA, an Intel SSD that’s currently $900 at NewEgg, and some sort of boot device, and we’re in the $12,500 range.

When I commented on the testing on Twitter, some VMware fanboys responded that they could have built an even faster configuration with a PCIe SSD and 15K RPM drives. As I'll explain later, the 15K drives probably wouldn’t affect the results, but substituting a 785 GB Fusion-io ioDrive2 for the Intel SSD could have boosted performance. Of course, it would have also boosted the price by about $8,000. Frankly, if I were buying my kit from Dell, I would have chosen its NVMe SSD for another $1,000.

Incremental cost in this config

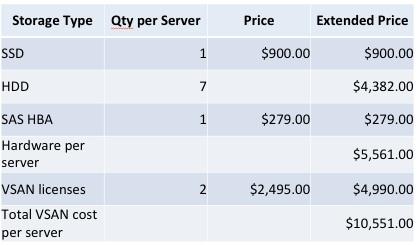

Before we move on to the performance, we should take a look at how much using the server for storage would add to the cost of those servers:

Since VSAN will use host CPU and memory -- less than 10 percent, VMware says -- this cluster will run about the same number of VMs as a cluster of 30 servers that were accessing external storage. Assuming that external storage is connected via 10 Gbit/s Ethernet, using iSCSI or NFS, those 30 servers would cost around $208,000. The VSAN cluster, by comparison, would cost $559,680, making the effective cost of VSAN around $350,000. I thought about including one of the RAM DIMMs in the incremental cost of VSAN and decided that since these servers use one DIMM per channel (eight DIMMs across the eight memory channels of two processors), that would be a foolish configuration.
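For anyone who wants to check the subtraction, here is a minimal sketch using only the totals quoted above. The variable names are mine, and the per-host cost and overhead figures are simply derived from those totals, not taken from VMware or Dell.

```python
# Totals quoted in the article (USD); the variable names are illustrative.
VSAN_CLUSTER_COST = 559_680       # 32 hosts running VSAN
EXTERNAL_CLUSTER_COST = 208_000   # 30 hosts using external storage
VSAN_HOSTS = 32
EXTERNAL_HOSTS = 30

# What VSAN effectively adds to the bill for the same VM capacity.
vsan_premium = VSAN_CLUSTER_COST - EXTERNAL_CLUSTER_COST

# Derived figures: average cost of one VSAN host, and the share of
# the cluster assumed to be consumed by VSAN itself (32 hosts doing
# the work of 30).
cost_per_vsan_host = VSAN_CLUSTER_COST / VSAN_HOSTS
overhead_fraction = 1 - EXTERNAL_HOSTS / VSAN_HOSTS

print(f"VSAN premium:       ${vsan_premium:,}")
print(f"Cost per VSAN host: ${cost_per_vsan_host:,.0f}")
print(f"Implied overhead:   {overhead_fraction:.1%}")
```

The premium works out to a bit over the $350,000 I use as a round number, and treating 32 hosts as equivalent to 30 implies an overhead comfortably under VMware's 10 percent figure.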

That $350,000 will give you 246 TB of raw capacity. Since VSAN uses n-way replication for data protection, using two total copies of the data -- which VMware calls failures to tolerate=1 -- would yield 123 TB of usable space. As a paranoid storage guy, I don’t trust two-way replication as my only data protection and would use failures to tolerate=2, or three total copies, for 82 TB of space. You’ll also have 12.8 TB of SSDs to use as a read cache and write buffer (essentially a write-back cache).
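The capacity figures follow directly from the hardware list. As a rough sketch, assuming decimal units (1 TB = 1,000 GB) and that usable space is simply raw space divided by the number of copies (the helper name `usable_tb` is my own):

```python
# Per-host hardware from the configuration list above.
HOSTS = 32
HDDS_PER_HOST = 7
HDD_TB = 1.1      # capacity of each 10K RPM SAS drive
SSD_GB = 400      # one Intel S3700 per host

raw_tb = HOSTS * HDDS_PER_HOST * HDD_TB
flash_tb = HOSTS * SSD_GB / 1000  # decimal TB


def usable_tb(raw, failures_to_tolerate):
    """Usable space with n-way replication: FTT=1 keeps 2 copies, FTT=2 keeps 3."""
    copies = failures_to_tolerate + 1
    return raw / copies


print(f"Raw disk capacity: {raw_tb:.1f} TB")
print(f"Usable at FTT=1:   {usable_tb(raw_tb, 1):.1f} TB")
print(f"Usable at FTT=2:   {usable_tb(raw_tb, 2):.1f} TB")
print(f"Total flash:       {flash_tb:.1f} TB")
```

This ignores any metadata or formatting overhead, so treat the results as upper bounds.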

So at three copies of the data, the VSAN configuration comes in at about $4/GB of usable capacity, which actually isn’t that bad.
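Here is the per-gigabyte arithmetic as a short sketch, using the rounded figures from the text ($350,000, 123 TB, and 82 TB) and decimal units. The function name `cost_per_gb` is just for illustration.

```python
# Rounded figures from the article.
VSAN_PREMIUM_USD = 350_000
USABLE_TB_FTT1 = 123   # two copies of the data
USABLE_TB_FTT2 = 82    # three copies of the data


def cost_per_gb(cost_usd, usable_tb):
    """Dollars per usable GB, assuming 1 TB = 1,000 GB."""
    return cost_usd / (usable_tb * 1000)


print(f"FTT=1: ${cost_per_gb(VSAN_PREMIUM_USD, USABLE_TB_FTT1):.2f}/GB")
print(f"FTT=2: ${cost_per_gb(VSAN_PREMIUM_USD, USABLE_TB_FTT2):.2f}/GB")
```

The roughly $4/GB figure corresponds to the three-copy case; with only two copies, the same spend buys noticeably cheaper capacity.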

The 100 percent read benchmark

As most of you are probably aware, 100 percent read benchmarks are how storage vendors make flash-based systems look their best. While there are some real-world applications that are 90 percent or more reads, they are usually things like video streaming or websites, where the ability to stream large files is more important than random 4K IOPS.

For its 100 percent read benchmark, VMware set up one VM per host with each VM’s copy of IOmeter doing 4 KB I/Os to eight separate virtual disks of 8 GB each. Sure, those I/Os were 80 percent random, but with a test dataset of 64 GB on a host with a 400 GB SSD, all the I/Os will be to the SSD. Since SSDs can perform random I/O as fast as they can sequential, that’s not as impressive as it seems.
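To put the per-host working set in perspective, here is a quick sketch comparing it to the SSD in each server. It assumes the whole 400 GB device is available to VSAN, which is a simplification; the names are illustrative.

```python
# Benchmark setup described above, per host.
VIRTUAL_DISKS_PER_VM = 8
VIRTUAL_DISK_GB = 8
SSD_GB = 400

working_set_gb = VIRTUAL_DISKS_PER_VM * VIRTUAL_DISK_GB
ssd_fraction = working_set_gb / SSD_GB

print(f"Working set per host: {working_set_gb} GB")
print(f"Share of the SSD:     {ssd_fraction:.0%}")

# If the working set fits in flash, reads never need to touch the spinning disks.
fits_in_flash = working_set_gb <= SSD_GB
print(f"Fits in flash:        {fits_in_flash}")
```

Even if only part of the SSD serves as read cache, a working set this small leaves plenty of headroom.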

Why you should ignore 2 million IOPS

Sure, VMware coaxed 2 million IOPS out of VSAN, but it used 2 TB of the system’s 259 TB of storage to do it. No one would build a system this big for a workload that small, and by keeping the dataset size so small compared to the SSDs, VMware made sure the disks weren’t going to be used at all...
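The cluster-wide numbers can be reproduced the same way. This sketch assumes the 259 TB total is the spinning disks plus the SSDs, which matches the hardware list, and uses decimal units throughout.

```python
HOSTS = 32
DATASET_PER_HOST_GB = 64   # eight 8 GB virtual disks per VM, one VM per host
HDD_TB_PER_HOST = 7 * 1.1  # seven 1.1 TB drives
SSD_TB_PER_HOST = 0.4      # one 400 GB SSD

dataset_gb = HOSTS * DATASET_PER_HOST_GB
total_storage_tb = HOSTS * (HDD_TB_PER_HOST + SSD_TB_PER_HOST)
fraction_touched = (dataset_gb / 1000) / total_storage_tb

print(f"Benchmark dataset: {dataset_gb:,} GB")
print(f"Total storage:     {total_storage_tb:.0f} TB")
print(f"Fraction touched:  {fraction_touched:.2%}")
```

In other words, the benchmark exercised well under 1 percent of the storage in the cluster.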