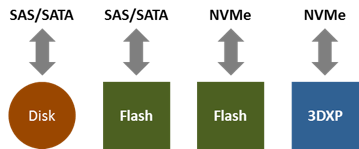

NVMe is a relatively new protocol for accessing data stored on solid-state drives. Unlike spinning disks, SSDs store data on some form of non-volatile memory (NVM). This NVM can be either flash (NAND) or a next-generation NVM such as 3D XPoint (3DXP). Note that NVMe, the protocol, is different from NVM, the storage medium.

NVMe (the "e" stands for express) is designed to be leaner and faster than its predecessors, SAS and SATA. It shaves off about 20us from the latency added by the I/O stack. This improvement is negligible compared to the internal latency of a spinning disk (5000us), but it is noticeable compared to the internal latency of a flash SSD (100us), and it would be dramatic compared to the internal latency of a future SSD with 3DXP (less than 10us). So, while flash SSDs are available with SAS/SATA or NVMe interfaces, 3DXP SSDs will be available with NVMe only.
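To make the comparison concrete, here is a minimal sketch of the arithmetic, assuming a simplified model in which total latency with SAS/SATA is just the device latency plus the roughly 20us of extra stack latency. The function name and the use of 10us as an upper bound for 3DXP are illustrative assumptions, not measurements.

```python
# Internal device latencies quoted in the text, in microseconds.
# The 3DXP figure is "less than 10us"; 10 is used here as an upper bound.
DEVICE_LATENCY_US = {"spinning disk": 5000, "flash SSD": 100, "3DXP SSD": 10}

# I/O stack latency that NVMe removes relative to SAS/SATA, per the text.
STACK_SAVING_US = 20


def relative_saving(device_latency_us, saving_us=STACK_SAVING_US):
    """Fraction of total latency removed by moving from SAS/SATA to NVMe.

    Simplified model (an assumption): total latency with SAS/SATA is the
    device latency plus the extra stack latency, and NVMe removes the latter.
    """
    total_with_sas = device_latency_us + saving_us
    return saving_us / total_with_sas


for name, latency in DEVICE_LATENCY_US.items():
    print(f"{name}: {relative_saving(latency):.1%} of total latency removed")
```

Under this model the saving is well under 1% for a spinning disk, about one-sixth for a flash SSD, and about two-thirds for a 3DXP SSD.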

Besides improving latency, NVMe improves the bandwidth to each SSD. It connects the CPU to the SSDs directly over PCIe, which means there is no need for an intervening HBA, and a greater number of PCIe lanes can be employed. A SAS lane runs at 12Gb/s, which shrinks to about 1GB/s after overheads. A SATA lane supports half of that. A PCIe lane runs at 1GB/s, and a typical NVMe SSD can be connected to four such lanes, supporting up to 4GB/s. Indeed, NVMe enthusiasts are quick to compare a SATA SSD running at 0.5GB/s and an NVMe SSD running at 3GB/s. That’s 6x higher throughput!
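The per-drive numbers above reduce to simple multiplication. The sketch below uses only the approximate figures from this paragraph; the dictionary and function names are made up for illustration.

```python
# Usable bandwidth per lane in GB/s, as approximated in the text.
LANE_GBPS = {"SAS": 1.0, "SATA": 0.5, "PCIe": 1.0}


def link_bandwidth(interface, lanes=1):
    """Peak bandwidth of one drive's link, in GB/s."""
    return LANE_GBPS[interface] * lanes


sata_link = link_bandwidth("SATA")           # single SATA lane
nvme_link = link_bandwidth("PCIe", lanes=4)  # typical NVMe SSD on four lanes

# Drive throughputs from the enthusiasts' comparison in the text, in GB/s.
sata_drive, nvme_drive = 0.5, 3.0

print(f"SATA link: {sata_link} GB/s, NVMe x4 link: {nvme_link} GB/s")
print(f"Drive-level speedup: {nvme_drive / sata_drive:.0f}x")
```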

But a storage system contains multiple SSDs, typically more than 10. With so many SSDs, drive-level throughput is rarely the bottleneck or determinant of system-level throughput.

System-level performance

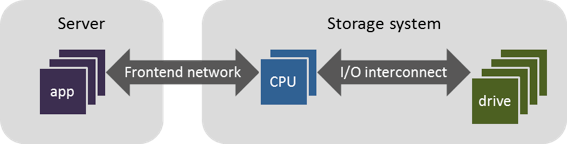

In general, the performance of a storage system is bound by one of the following resources:

- The front-end network connecting applications to storage.

- The CPUs running the storage software.

- The I/O interconnect between the CPUs and storage drives or modules. For a system using SAS/SATA drives, this includes PCIe lanes, a SAS HBA, SAS lanes, and perhaps a SAS expander. The total bandwidth of such an interconnect is generally 4-12GB/s. For a system using NVMe, the interconnect includes PCIe lanes and perhaps a PCIe switch. The total bandwidth of this interconnect is generally 8-24GB/s.

- The storage drives, including the storage medium and the medium controllers.

Which of these four becomes the performance bottleneck depends on the system architecture and on the workload: for example, reads vs. writes, or random vs. sequential I/O.
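One way to picture this is to treat system throughput as the minimum of the four resource capacities. The sketch below is a toy model: the network and CPU figures are hypothetical, and only the per-drive and interconnect numbers are taken from the ranges in this article.

```python
def bottleneck(capacities_gbps):
    """Return (resource, throughput) for the lowest-capacity resource."""
    name = min(capacities_gbps, key=capacities_gbps.get)
    return name, capacities_gbps[name]


# Hypothetical system with 12 SATA SSDs; all values in GB/s.
sata_system = {
    "front-end network": 10.0,    # assumption, not from the text
    "CPU (data services)": 4.0,   # assumption, not from the text
    "I/O interconnect": 12.0,     # upper end of the SAS/SATA range
    "drives": 12 * 0.5,           # 12 SATA SSDs at 0.5 GB/s each
}

# Same system with NVMe drives and interconnect; CPU and network unchanged.
nvme_system = dict(sata_system)
nvme_system["I/O interconnect"] = 24.0   # upper end of the NVMe range
nvme_system["drives"] = 12 * 3.0         # 12 NVMe SSDs at 3 GB/s each

print(bottleneck(sata_system))
print(bottleneck(nvme_system))
```

With these made-up numbers both configurations come out CPU bound, so the much faster drives and interconnect leave system throughput unchanged in this model.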

Traditional storage systems using disk drives are generally drive bound. However, modern systems using flash drives behave quite differently, because flash drives are much faster than disk drives. For most workloads, flash-based systems are CPU bound. Most of the CPU is consumed in providing data services such as high availability, data reduction, and data protection.

Less commonly, a flash-based system might be drive bound. This could happen if the system has a small number of SSDs, if it does not distribute the load across the drives, or if it uses older drives that cannot fully utilize the SAS/SATA interface. Even less commonly, the system might be bound by the interconnect or the front-end network. This could happen for selected workloads, e.g., bursts of sequential I/O with large I/O sizes. Or it could happen if the storage system is designed to provide raw performance at the expense of sophisticated data services.

When a system is CPU bound, the use of NVMe instead of SAS/SATA still might improve performance because the NVMe driver is more CPU efficient than the SCSI driver. But this gain is modest—less than 20%—because most of the CPU is consumed by data services, not protocol drivers.
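Why the gain stays modest can be seen with an Amdahl's-law-style estimate. This is a sketch under stated assumptions: the share of CPU spent in the protocol driver and the relative cost of the NVMe driver are hypothetical parameters, not figures from any vendor.

```python
def throughput_gain(driver_share, nvme_relative_cost):
    """Estimated throughput gain of a CPU-bound system moving to NVMe.

    driver_share: fraction of per-I/O CPU time spent in the SCSI driver
        (hypothetical input).
    nvme_relative_cost: CPU cost of the NVMe driver relative to the SCSI
        driver, e.g. 0.5 means half the cycles (hypothetical input).
    """
    # Data services cost is unchanged; only the driver portion shrinks.
    new_cost = (1 - driver_share) + driver_share * nvme_relative_cost
    return 1 / new_cost - 1


# Example: driver uses 20% of the CPU and NVMe halves that cost.
print(f"{throughput_gain(0.20, 0.5):.1%}")

# Even a free driver cannot recover more than the driver's own share.
print(f"{throughput_gain(0.15, 0.0):.1%}")
```

In the first example the gain is about 11%. In the second, where the driver cost drops to zero, it is still under 20%, because the bulk of the CPU goes to data services.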

Your mileage might vary, and you should ask any storage vendor offering NVMe what performance gain to expect on your workload, not on their benchmarks.

Fortunately, NVMe can be incorporated into a storage system with straightforward changes in the interconnect layout, without a major change to the storage architecture at large. There is one hitch: NVMe SSDs with dual ports are expensive. But their price is likely to drop to near that of SATA SSDs. So, over time, all flash-based systems will adopt NVMe. Some might adopt it sooner than the others, but it is not a fundamental differentiator.

Overall, using NVMe SSDs in a storage system is like using “high-performance tires” on a car. In most cases, they provide a modest gain in performance and do not require a change to the engine. Nice to have, but not a fundamental differentiator.

Perhaps of greater interest is a recent extension of NVMe known as NVMe over Fabrics (NVMf). NVMf executes I/O across hosts using RDMA-capable networks such as RoCE. While NVMe over PCIe shaves off about 20us relative to SAS, NVMf can shave off about 100us from the round-trip latency between two hosts relative to protocols such as iSCSI. It also saves the CPU cycles otherwise spent on TCP/IP processing. This can be particularly beneficial in scale-out systems for transferring data between hosts. It does require RDMA-capable NICs and DCB-capable switches, so it will take some time for mass adoption.

3D XPoint SSDs

While NVMe is nice to have for flash SSDs, it's critical for 3DXP SSDs.

This is not surprising given that Intel, which led the release of NVMe in 2011, is also the co-creator of 3DXP. The internal latency for 3DXP SSDs is less than 10us, which is far quicker than 100us for flash SSDs. This means that workloads with low queue depth -- with few IOs outstanding at any time -- will run much faster on 3DXP SSDs than they would on flash SSDs. If one were to use a 3DXP SSD with SAS instead of NVMe, it would more than triple the latency and take a big bite out of the lure of 3DXP.
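The effect of latency at low queue depth follows from Little's law: throughput is roughly the number of outstanding I/Os divided by the latency of each. The sketch below applies that relation to the latencies quoted in this article, using 10us as an upper bound for 3DXP. It ignores any cap from device parallelism, so it is an idealized estimate rather than a prediction.

```python
def iops(queue_depth, latency_us):
    """Idealized IOPS from Little's law: outstanding I/Os / latency."""
    return queue_depth * 1_000_000 / latency_us


SAS_EXTRA_US = 20  # extra stack latency of SAS relative to NVMe, per the text

configs = {
    "flash SSD over NVMe": 100,
    "3DXP SSD over NVMe": 10,
    "3DXP SSD over SAS": 10 + SAS_EXTRA_US,
}

for name, latency in configs.items():
    print(f"{name}: {iops(1, latency):,.0f} IOPS at queue depth 1")
```

In this model a 3DXP SSD behind SAS delivers only about a third of the queue-depth-1 IOPS it would deliver behind NVMe.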

With access latency of 10us, 3DXP is a more fundamental change than NVMe alone. It introduces a new and differentiated layer in the storage media pyramid—between flash and NVRAM (based on DRAM).

Relative to a flash SSD, a 3DXP SSD will be 10x faster at low queue depth, 10x more endurant in number of writes, but also 10x more expensive per gigabyte. Given the 10x difference in price and performance, it would be beneficial to combine flash and 3DXP SSDs such that flash is used for storing data and 3DXP is used for storing metadata or caching data. This will make hybrid flash+3DXP systems more attractive than pure flash systems.
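A rough way to see the appeal of a hybrid is to compute the blended cost and the average read latency for a small 3DXP tier. The sketch below assumes the 10x ratios above; the 5% capacity share and the 80% hit rate are hypothetical inputs chosen only for illustration.

```python
FLASH_COST = 1.0           # relative cost per GB, flash as the baseline
XPOINT_COST = 10.0         # about 10x flash per GB, per the text
FLASH_LATENCY_US = 100.0   # internal latency of a flash SSD
XPOINT_LATENCY_US = 10.0   # upper bound for a 3DXP SSD


def blended_cost(xpoint_fraction):
    """Relative cost per GB when a fraction of capacity is 3DXP."""
    return (1 - xpoint_fraction) * FLASH_COST + xpoint_fraction * XPOINT_COST


def average_read_latency(hit_rate):
    """Average device latency when hit_rate of reads are served from 3DXP."""
    return hit_rate * XPOINT_LATENCY_US + (1 - hit_rate) * FLASH_LATENCY_US


# Hypothetical hybrid: 5% of capacity on 3DXP, serving 80% of reads.
print(f"cost: {blended_cost(0.05):.2f}x flash")
print(f"latency: {average_read_latency(0.80):.0f}us")
```

With these inputs the hybrid costs about 1.45x as much per gigabyte as pure flash while cutting average device latency from 100us to about 28us.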

Relative to an NVRAM DIMM, a 3DXP SSD is more than 10x slower, far less endurant, and 10x cheaper. Therefore, use cases such as write caching, which are the most sensitive to latency and endurance but do not need as much capacity, will continue to be best served by NVRAM.

Eventually, the full potential of 3DXP will be realized not in SSDs using NVMe but in NVDIMMs on the memory channel. This is because the true latency of 3DXP memory is claimed to be less than 1us, and wrapping it up into an SSD appears to increase the latency to 10us.

And so the tick-tock progression of storage protocols and storage media will continue. A new storage protocol is a modest tick. A new storage medium is a tock!

Umesh Maheshwari, Nimble Storage founder and CTO, is responsible for defining the company’s product architecture and developing core technologies. Before founding Nimble, he served as an early architect at Data Domain where he developed parts of their deduplicating file system and WAN-efficient replication. Previously, Umesh was at Zambeel, a maker of scalable file servers, where he developed a clusterized metadata service and automatic network configuration. Prior to Zambeel, he was at InterTrust. Umesh holds a PhD in computer science from MIT. He also holds a BTech in computer science from IIT Delhi, where he received the President’s Gold Medal as the top graduating student.