As I leaf through the pages of my cloud scrapbook, I'm struck by how much valuable ink has been wasted repeating charges about cloud computing that just aren't true. Each year, sure as clockwork, Oracle CEO Larry Ellison tries to come up with a new put-down of the multi-tenant cloud during Oracle OpenWorld, like throwing mud against the wall to see if it sticks. Each year, the results are the same -- splat and slide, gravity beats FUD.

Let's start with security myths. I've long been interested in the supposed lack of security in the cloud and have concluded that cloud operations are more secure than those of the average data center. That doesn't mean there aren't a lot of gaps and loopholes when it comes to sending your data over the Internet to cloud servers; any exposure to the public Internet carries its own hazards.

Data movement to the cloud is not a layup. But the standard of operations at Amazon Web Services, Terremark, Rackspace, Savvis and others is high enough that you can be assured of best practices on a more consistent basis than in many enterprise data centers. These centers are also going to be irresistible targets, and eventually an isolated breach will occur. Achieving security in the cloud is a journey that's just gotten underway.

I've also heard many critics say there's no definition of cloud computing, because there isn't really anything new to define. In fact, there is the NIST definition, but I'm inclined to say it's more description than definition.

Cloud computing revolves around a new pattern of distributing computing power, not a new technology. In this new pattern, the end user has much more control than he used to over a powerful, remote server owned by somebody else. That control can extend up to the point where he achieves programmatic control over the server, if desired. Getting that control while engaging in one of the lowest-cost forms of computing is the heart of the cloud, an emerging relationship between the end user and publicly accessible data services.

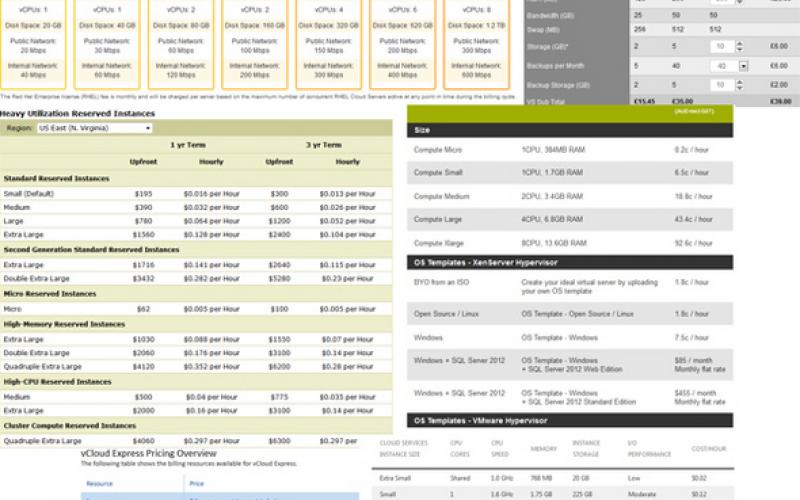

The myths that are most difficult to bust are the ones involving cloud costs. There are many circumstances where monitoring cloud usage gets away from IT managers. They lose track of what employees have spun up; at the end of the month IT is presented with a big, surprising bill.

Before any cost comparison can be made, the cloud customer needs to know what specific operations in his own data center cost--a major research project. Some IT organizations do not have a true measure of total data center cost.

Explore my list of the top seven cloud myths that continue to bedevil prospective cloud users. Then weigh in with your opinion by leaving a comment.